This post will address one of the most important science-related concepts I think I’ve ever grasped: No evidence is not the same as evidence against.

“Whatever do you mean, Dr. Galloswag?!” exclaims you.

Okay – let’s think about Facebook use in relation to anxiety. Facebook has been accessible to the unwashed masses since 2006. I didn’t sign up until I began undergrad in Fall 2007. #ancient Pretend my mum was nervous about the idea of me joining Facebook.

***

Mum of Cgallo: “I don’t know sweetums, it just seems like having that much interaction with random people without actually seeing them face-to-face could be bad for your mental health.”

Young Cgallo: “There is no evidence that Facebook use is linked to anxiety, Mum! Get out of my face!”

***

Guess what? Young Cgallo was technically right – at the time there wasn’t any scientific evidence. When I entered the search terms “Facebook, anxiety” into PubMed (a database of life-science / biomedical articles), the earliest search result was from 2009, and the earliest might-be-relevant search result was dated 2013.

Why this delay? Because it took a while for older adults to realize how impactful Facebook was. Because research is slow. And so there was no evidence because, well, no one had looked for it. But now there are articles galore on the relationship between Facebook use (and other forms of social media) and anxiety.

So Cgallo’s Mum in this imaginary situation was vindicated over time!

Takeaway #1: Sometimes someone can be technically correct that there is “no evidence” — but that’s because no one has published data on the topic at all!

Now, let’s imagine another scenario: what if there had been multiple studies of Facebook and anxiety, but most or all of them reported no significant correlation between Facebook use and anxiety? That is much more informative than there simply being no data at all… but it’s only moderately in favor of young Cgallo. When a study doesn’t find a relationship, it could be because…

- There really is no correlation between the variables of interest (in this case, Facebook use and anxiety).

OR…

- Power issues: The study did not include enough participants to detect a meaningful relationship even if one was there.

- Operational-definition issues: The study defined anxiety in a funky way. One study might decide to look at Facebook use in relation to being diagnosed with an anxiety disorder by a therapist, another in relation to scoring high on a standardized anxiety scale, yet another in relation to self-reported feelings of stress.

- Time-scale issues: The study could have looked at the effect of Facebook use over the course of a week and found no correlation to anxiety. That still doesn’t tell us much about the effect of Facebook use over several years.
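To make the power issue a bit more concrete, here is a rough simulation sketch. Everything in it is an assumption for illustration: I pretend the true correlation between Facebook use and anxiety is 0.2, I use a standard Fisher z-test at the usual 0.05 cutoff, and the function names are made up for this post. It just counts how often a pretend study "finds" a relationship that really is there.

```python
import math
import random
from statistics import NormalDist


def pearson_r(xs, ys):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def simulate_power(true_r, n, trials=500, alpha=0.05, seed=0):
    """Fraction of simulated studies that detect a real correlation of true_r.

    Hypothetical setup: both variables are standard normal, and each study
    uses a two-sided Fisher z-test on its sample correlation.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    detections = 0
    for _ in range(trials):
        xs, ys = [], []
        for _ in range(n):
            x = rng.gauss(0, 1)
            # Build y so that its true correlation with x is true_r.
            y = true_r * x + math.sqrt(1 - true_r ** 2) * rng.gauss(0, 1)
            xs.append(x)
            ys.append(y)
        z_stat = math.atanh(pearson_r(xs, ys)) * math.sqrt(n - 3)
        if abs(z_stat) > z_crit:
            detections += 1
    return detections / trials


if __name__ == "__main__":
    for n in (30, 500):
        print(f"n={n}: detected in {simulate_power(0.2, n):.0%} of simulated studies")
```

Under these assumptions, the small studies miss the real relationship most of the time, while the large ones almost always catch it. So a "no significant correlation" result from a tiny study tells you very little.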

Takeaway #2: Sometimes someone can be technically correct that there is “no evidence” — but that’s because all the studies conducted on the issue of interest had design issue(s).

I remember the first time I really thought about this in graduate school, and it was actually pretty frustrating. But there is almost always going to be another, usually different/better, way a researcher could approach a research question. So oftentimes, a lack of evidence means absolutely nothing meaningful IRL.

“What are you getting at here, Cgallo? Are you trying to suggest that we can never really say with confidence that two things are not related to each other??” demands you.

“Mais non!” Cgallo sputters.

For one, if there are many good-quality (e.g., large sample size, good operational definitions, relevant time scales) studies conducted on an issue and none of them find an association, that’s a pretty good clue that there may not actually be a relationship between Facebook use and anxiety, or whatever you’re interested in (autism and vaccines *cough cough*).
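One way to see why lots of big null studies count for more than one tiny null study is to look at the confidence interval around the pooled correlation. The sketch below uses a simple fixed-effect pooling of Fisher z-transformed correlations, which is one common approach and not the only one. The study numbers are hypothetical, invented purely for illustration.

```python
import math


def pooled_correlation_ci(studies, z_crit=1.96):
    """Fixed-effect pooled correlation with a roughly 95% confidence interval.

    `studies` is a list of (r, n) pairs: each study's correlation and its
    sample size. Each study is weighted by n - 3, the inverse variance of
    its Fisher z-transformed correlation.
    """
    weights = [n - 3 for _, n in studies]
    z_values = [math.atanh(r) for r, _ in studies]
    total_weight = sum(weights)
    pooled_z = sum(w * z for w, z in zip(weights, z_values)) / total_weight
    standard_error = 1 / math.sqrt(total_weight)
    # Transform back from the z scale to the correlation scale.
    return (
        math.tanh(pooled_z),
        math.tanh(pooled_z - z_crit * standard_error),
        math.tanh(pooled_z + z_crit * standard_error),
    )


# Hypothetical numbers, not real studies.
one_small_study = [(0.00, 20)]
many_big_studies = [(0.01, 1000), (-0.02, 1200), (0.00, 900)]

if __name__ == "__main__":
    for label, studies in (("one small study", one_small_study),
                           ("many big studies", many_big_studies)):
        r, low, high = pooled_correlation_ci(studies)
        print(f"{label}: r = {r:.3f}, interval {low:.3f} to {high:.3f}")
```

Both sets of made-up studies "found nothing," but the single small study is compatible with anything from a moderately negative to a moderately positive correlation, while the big studies together pin the correlation close to zero.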

But let’s contrast all of this with the gold standard: evidence against!

What do I mean? Well, many researchers are terrified of publishing mumbo jumbo, so they err on the side of caution and choose statistical tests that are more likely to give false negatives than false positives (I may go more into what this means in a future post, if it pleases the queen). As a result of this statistical conservatism (teehee), it’s quite difficult to get results that say “yes! Thing 1 is related to thing 2 in a meaningful way!” *SO* if you really want to argue that Facebook use doesn’t make people anxious, find evidence against that idea. How? Well, what if there were an entire body of research pointing to Facebook use being linked to feelings of calm, tranquility, peace, stability, happiness, etc.? That is very different – and in my opinion, much more meaningful – than a simple absence of evidence.
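Here is a small sketch of that false-negative / false-positive trade-off. It uses the normal approximation for a Fisher z-test, and the specifics (a true correlation of 0.2, 100 participants, the function name `miss_rate`) are my own assumptions for illustration. The stricter the cutoff for calling something significant, the fewer false positives you get, and the more real effects you miss.

```python
import math
from statistics import NormalDist


def miss_rate(true_r, n, alpha):
    """Approximate chance that a two-sided test misses a real correlation.

    Assumes a Fisher z-test with n participants, where the true correlation
    is true_r and alpha is the accepted false-positive rate.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic when the effect is real.
    shift = math.atanh(true_r) * math.sqrt(n - 3)
    power = (1 - std_normal.cdf(z_crit - shift)) + std_normal.cdf(-z_crit - shift)
    return 1 - power


if __name__ == "__main__":
    for alpha in (0.05, 0.01, 0.001):
        print(f"false-positive rate {alpha}: "
              f"miss rate {miss_rate(0.2, 100, alpha):.2f}")
```

In this hypothetical setup the miss rate is far larger than the false-positive rate at every cutoff, which is the lopsidedness described above.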

Takeaway #3: The absence of evidence for something (e.g. Facebook use being anxiogenic) is not nearly as powerful as evidence for the opposite (e.g. Facebook use being anxiolytic).

Great! I think we all feel better now! Make sure you share this article on Facebook!

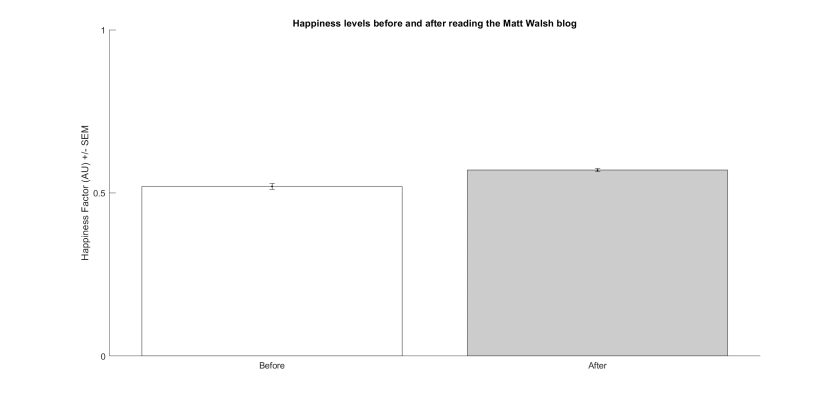

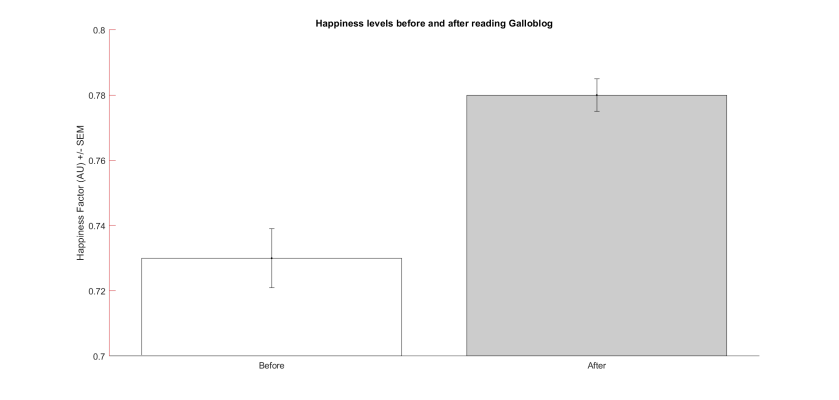

Now, “zooming” in on the differences between groups isn’t always a shady scientific practice, but when evaluating the quality / importance of the data presented it’s important to have an understanding of what the possible range of scores actually is.
